Cybersecurity Ventures expects global cybercrime costs to grow by 15% every year and predicts that they will reach $10.5 trillion annually by 2025. Security testing plays a vital role in preventing many of these crimes.

In this article, let’s look at five security testing challenges and how to tackle them.

Generally, security requirements are not given the same importance as functional requirements. Sometimes they are considered only after certain modules have been developed and, in some cases, just before release. Not defining security requirements up front makes security testing difficult.

Solution:

Functional test cases are typically given a high level of importance. Even though we write positive and negative cases, they often lack quality test data covering security use and misuse cases. Sometimes security test cases are written only shortly before security testing starts. In other cases, security testing is done ad hoc without any written test cases.

Delaying test case writing reduces the efficiency and effectiveness of security testing. Because we follow an agile methodology, it is essential to update the test cases whenever requirements are added or modified.
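To make the idea of security use and misuse cases more concrete, here is a minimal sketch of how such test data could sit next to functional cases. The `is_safe_username` validator, its allow-list pattern, and the sample payloads are all hypothetical. They only illustrate the shape of such a table and are not a complete input-validation strategy.

```python
import re

# Hypothetical validator under test: accepts 3-20 letters, digits, or underscores.
USERNAME_PATTERN = re.compile(r"[A-Za-z0-9_]{3,20}")


def is_safe_username(value: str) -> bool:
    """Return True only when the whole value matches the allow-list pattern."""
    return USERNAME_PATTERN.fullmatch(value) is not None


# Each case pairs an input with the expected verdict and a short reason.
SECURITY_TEST_CASES = [
    ("alice_01", True, "use case: ordinary username"),
    ("' OR '1'='1", False, "misuse case: SQL injection probe"),
    ("<script>alert(1)</script>", False, "misuse case: script injection probe"),
    ("../../etc/passwd", False, "misuse case: path traversal probe"),
    ("a" * 500, False, "misuse case: oversized input"),
]


def run_cases():
    """Return the reasons of all cases whose outcome differs from the expectation."""
    return [
        reason
        for value, expected, reason in SECURITY_TEST_CASES
        if is_safe_username(value) != expected
    ]


if __name__ == "__main__":
    failures = run_cases()
    print("all cases passed" if not failures else failures)
```

Keeping the misuse cases in the same table as the ordinary cases makes it easier to update them together when a requirement changes.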

Solution:

API security is as important as front-end security. Most of the attacks we simulated at the API level during security testing went unnoticed unless we were given access to the database. APIs might also expose sensitive data to unauthorized users.

APIs are developed early, so testing them for security at that stage helps ensure that the basic security requirements are met.
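As a rough illustration of the kind of checks API-level security testing covers, the sketch below uses an in-memory stand-in for an endpoint. The `get_user_profile` handler, the tokens, and the field names are hypothetical and do not come from a real service. The three checks are: a request without a token is rejected, a user cannot read another user's record, and the response never contains the password hash.

```python
# In-memory stand-ins for a user store and session tokens (all values are made up).
USERS = {
    1: {"id": 1, "name": "Asha", "email": "asha@example.com", "password_hash": "x1"},
    2: {"id": 2, "name": "Ben", "email": "ben@example.com", "password_hash": "x2"},
}
TOKENS = {"token-asha": 1, "token-ben": 2}
PUBLIC_FIELDS = ("id", "name", "email")


def get_user_profile(token, user_id):
    """Hypothetical handler: returns (status_code, body) like an HTTP endpoint."""
    caller_id = TOKENS.get(token)
    if caller_id is None:
        return 401, {"error": "authentication required"}
    if caller_id != user_id:
        return 403, {"error": "forbidden"}
    user = USERS[user_id]
    # Return only allow-listed fields so secrets never leave the API.
    return 200, {field: user[field] for field in PUBLIC_FIELDS}


def check_api_security():
    """Run the three basic checks and return a dict of named results."""
    no_token_status, _ = get_user_profile(None, 1)
    other_user_status, _ = get_user_profile("token-ben", 1)
    own_status, own_body = get_user_profile("token-asha", 1)
    return {
        "rejects_missing_token": no_token_status == 401,
        "rejects_other_user": other_user_status == 403,
        "hides_password_hash": own_status == 200 and "password_hash" not in own_body,
    }


if __name__ == "__main__":
    print(check_api_security())
```

Against a real API, the same three checks would be sent as HTTP requests through a proxy tool or a test client rather than called as a function.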

Solution:

This is the biggest challenge. We decided to implement security testing and started self-learning. But there were only a few resources online. Security testing is vast, and we weren’t sure about the proper methods.

Outsourcing might seem like a good option, but handing security testing entirely to an external team is not ideal. It would be time-consuming, and some critical security bugs might be missed.

An internal team can perform effective preliminary testing because it already knows the sensitive data to be protected, the source code, and the requirements.

Solution:

There are various tools available for security testing. The skillset of the tester and the budget determine the selection of tools. Initially, I was using OWASP ZAP. Then I started using Burp Suite on a tech lead’s suggestion.

In my experience, ZAP works best for API security testing because it is convenient to collect and view all the APIs in its site tree. Burp Suite is the tool most web security testers prefer. Its intercepting proxy lets us view and modify requests and responses conveniently and shows how the UI responds to them.

Solution:

When time constraints prevent a secure software development cycle from being practised, only ad hoc security testing is possible. Because the security requirements are not defined and the sensitivity of the data is not recorded anywhere, it is tough to decide whether a finding is a security bug. As a result, confidence in security test coverage is low, which could lead to an unpredictable release.

In a new project, we can readily achieve a basic level of application security by following the solutions above. In an ongoing project or product, it is partially feasible to implement the BDD method of agile security testing.

Defining security requirements early avoids conflicts and confusion in the later phases and minimizes security-related bugs. It also improves the internal team's accountability for the security of the application, resulting in a predictable release with minimum cost, stress, and risk.

Authored by Shamphavi Shanmugasundram @ BISTEC Global

The CIA triad is a high-level checklist to evaluate security procedures. It is a vital model used to assess the risks, threats, and vulnerabilities of the system.

The CIA triad, also known as the AIC triad, is a benchmark model for assessing information security. It guides security professionals in protecting any asset that is valuable for running a business.

Security testing should start from the requirement phase of the software development lifecycle (SDLC), and it should be integrated into every phase of the SDLC.

The CIA triad is helpful in identifying the information to be protected and in defining the security requirements for an application.

CIA stands for Confidentiality, Integrity, and Availability.

Confidentiality ensures that the system or data is exposed only to the right users. It can be ensured by building four security measures into an application: identification, authentication, authorization, and encryption.

The confidentiality of an application is assessed by checking whether sensitive information in the system is disclosed to unauthorized users. The sensitivity of data is a measure of its importance, and the owner of the data decides its sensitivity level.

Integrity ensures that the data is reliable. The system or data should be accurate, complete, and consistent.

The integrity of the data can be ensured by having proper security measures in our system. Some of them are hashing, user access controls, version control, backup and recovery procedures, error checking, and data validation.

The integrity of the data is measured against a factor called the baseline. The baseline is a measure of the current state of the data, and the goal of integrity is to preserve the baseline throughout a transaction.
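Hashing is one way to check that a baseline has been preserved. The sketch below records a SHA-256 digest of a record before a transaction and compares it afterwards. The `order` record and the helper names are hypothetical. A plain hash only helps if the stored baseline digest itself is protected from tampering.

```python
import hashlib
import json


def digest(record: dict) -> str:
    """Return a SHA-256 hex digest of the record in a canonical JSON form."""
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def is_unchanged(record: dict, baseline_digest: str) -> bool:
    """Compare the record's current digest with the stored baseline digest."""
    return digest(record) == baseline_digest


# Hypothetical record; the baseline is captured before the transaction starts.
order = {"order_id": 1001, "amount": "250.00", "currency": "USD"}
baseline = digest(order)

# Simulate a modification in transit.
tampered = dict(order, amount="2500.00")

if __name__ == "__main__":
    print("original unchanged:", is_unchanged(order, baseline))
    print("tampered unchanged:", is_unchanged(tampered, baseline))
```

Sorting the keys before hashing means two records with the same content always produce the same digest, regardless of key order.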

The data or system should be available to users whenever and wherever it is needed. The availability of the application can be ensured through hardware maintenance, software patching and upgrading, load balancing, and fault tolerance.

Appropriate levels of availability should be provided based on the criticality of the data. Criticality is the measure of the dependency of a system on data to perform its operation.
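Fault tolerance can be pictured with a very small failover routine: try each replica in turn and return the first response that succeeds. This is only a sketch under simplifying assumptions. The `primary` and `secondary` functions are hypothetical stand-ins for real service calls, and production systems usually add timeouts, health checks, and retries with backoff.

```python
from typing import Callable, Sequence


class AllReplicasFailed(Exception):
    """Raised when no replica could serve the request."""


def call_with_failover(replicas: Sequence[Callable[[], str]]) -> str:
    """Try each replica in order and return the first successful response."""
    errors = []
    for replica in replicas:
        try:
            return replica()
        except ConnectionError as exc:
            errors.append(exc)  # remember the failure and move on to the next replica
    raise AllReplicasFailed(f"{len(errors)} replica(s) failed")


def primary() -> str:
    raise ConnectionError("primary is down")  # simulated outage


def secondary() -> str:
    return "served by secondary"


if __name__ == "__main__":
    print(call_with_failover([primary, secondary]))
```

How many replicas a system needs follows from the criticality of its data: the more an operation depends on the data, the more redundancy is justified.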

The CIA triad helps in understanding and prioritizing the severity of a vulnerability in an application. Neglecting it leaves the application vulnerable to the interruption, interception, modification, and fabrication classes of attack.

The CIA triad serves as a yardstick for evaluating the security of an application. When a system meets CIA triad standards, its security is stronger and it is better equipped to handle threats.

Is the CIA triad enough for information security? No, but it covers most of the security loopholes in an application.

Authored by Shamphavi Shanmugasundram @ BISTEC Global

This article explains how to build a real-time data integration using Azure resources and a Python program. Using Azure Event Hubs and Azure Stream Analytics, we are going to stream real-time stock details to a Power BI dashboard.

Sign in to the Azure portal and navigate to your resource group section. Then create a resource group under your subscription.

Search for Event Hubs in the marketplace and create an Event Hubs namespace.

Now create an event hub under the namespace. You can also configure the partition count at this point.

To let the Python program communicate with the event hub, we have to create an access policy under the Event Hubs namespace. Go to Shared access policies in the left side panel.

Then create a shared access policy with the “Manage” option and save.

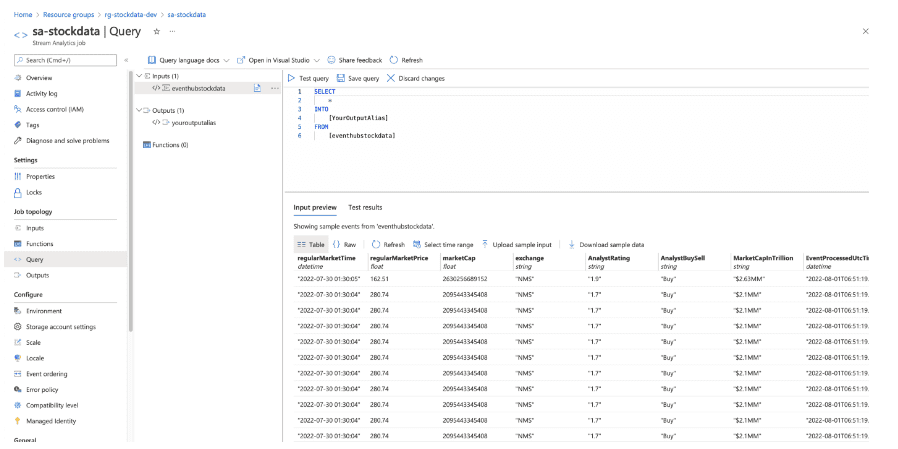
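The shared access policy provides a connection string made of `Key=Value` pairs separated by semicolons. Before pasting it into the Python program, it can be worth checking that all the parts are present. The sketch below does that with a small helper. `parse_connection_string` and the sample values are hypothetical, and the sample is a placeholder rather than a real key.

```python
REQUIRED_KEYS = ("Endpoint", "SharedAccessKeyName", "SharedAccessKey")


def parse_connection_string(conn_str: str) -> dict:
    """Split 'Key=Value;Key=Value' pairs into a dict and check the required keys."""
    parts = {}
    for segment in conn_str.strip().split(";"):
        if not segment:
            continue  # tolerate a trailing semicolon
        # Split on the first '=' only, because key values can end with '=' padding.
        key, sep, value = segment.partition("=")
        if not sep:
            raise ValueError("malformed segment (expected Key=Value)")
        parts[key] = value
    missing = [key for key in REQUIRED_KEYS if key not in parts]
    if missing:
        raise ValueError(f"missing keys: {', '.join(missing)}")
    return parts


# Placeholder values only; copy the real string from the policy in the Azure portal.
sample = (
    "Endpoint=sb://my-namespace.servicebus.windows.net/;"
    "SharedAccessKeyName=my-policy;"
    "SharedAccessKey=abc123=="
)
parsed = parse_connection_string(sample)

if __name__ == "__main__":
    print(parsed["Endpoint"], parsed["SharedAccessKeyName"])
```

Treat the connection string as a secret: keep it out of source control and load it from an environment variable or a secret store where possible.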

Search for Stream Analytics job in the marketplace and create one. Remember to select the same geographical location as the Event Hubs namespace. Using Stream Analytics, you will be able to access the data sent through the event hub.

After creating the Stream Analytics job, we have to configure its input. Go to the Inputs section under Job topology and set up the event hub configuration.

Using the Python program, we are going to retrieve stock information and send it to Azure through the event hub configured in our Azure account.

Import the libraries below to develop the Python program.

    import asyncio
    import json
    import warnings
    from datetime import datetime

    import pandas as pd
    from azure.eventhub import EventData
    from azure.eventhub.aio import EventHubProducerClient
    from yahoo_fin import stock_info as si

    warnings.filterwarnings("ignore", category=FutureWarning)
    warnings.filterwarnings("ignore", category=DeprecationWarning)

Python program to send stock information to an Azure event hub:

    con_string = 'Event Hub Connection String'  # placeholder: paste your connection string
    eventhub_name = 'Event Hub Name'  # placeholder: paste your event hub name


    def get_stock_data(stockName):
        # Pull the latest quote for the ticker and keep only the fields we need.
        stock_pull = si.get_quote_data(stockName)
        stock_df = pd.DataFrame([stock_pull], columns=stock_pull.keys())[
            ['regularMarketTime', 'regularMarketPrice', 'marketCap', 'exchange', 'averageAnalystRating']
        ]
        # Convert the epoch timestamp to a readable local-time string.
        stock_df['regularMarketTime'] = stock_df['regularMarketTime'].apply(datetime.fromtimestamp)
        stock_df['regularMarketTime'] = stock_df['regularMarketTime'].astype(str)
        # Split the analyst rating on ' - ' into the rating and the recommendation.
        stock_df[['AnalystRating', 'AnalystBuySell']] = stock_df['averageAnalystRating'].str.split(' - ', n=1, expand=True)
        stock_df.drop('averageAnalystRating', axis=1, inplace=True)
        # Express the market cap in trillions of dollars.
        stock_df['MarketCapInTrillion'] = stock_df.apply(
            lambda row: "$" + str(round(row['marketCap'] / 1000000000000, 2)) + 'T', axis=1
        )
        return stock_df.to_dict('records')


    # Quick check that a quote can be retrieved before sending anything.
    print(get_stock_data('AAPL'))


    async def run():
        while True:
            await asyncio.sleep(5)
            # Create a producer client to send messages to the event hub.
            # Specify a connection string to your event hubs namespace and
            # the event hub name.
            producer = EventHubProducerClient.from_connection_string(
                conn_str=con_string, eventhub_name=eventhub_name
            )
            async with producer:
                # Create a batch.
                event_data_batch = await producer.create_batch()
                # Add events to the batch.
                event_data_batch.add(EventData(json.dumps(get_stock_data('AAPL'))))
                # Send the batch of events to the event hub.
                await producer.send_batch(event_data_batch)
                print('Stock Data Sent To Azure Event Hub')


    try:
        # asyncio.run creates the event loop, runs run() until interrupted, and closes the loop.
        asyncio.run(run())
    except KeyboardInterrupt:
        pass
    finally:
        print('Closing Loop Now')

Once the Python program is running, it sends data to the Azure event hub. To verify this, go to the Query section of the Azure Stream Analytics job.
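It can also help to confirm the payload shape locally before looking at it in Azure. The program sends `json.dumps` of the list returned by `get_stock_data`, so each event body is a JSON array with one object per record. The sketch below checks that shape with the standard library only. The `validate_payload` helper is hypothetical, and the sample values are made up rather than real market data.

```python
import json

# Made-up sample with the same keys that get_stock_data() produces.
sample_records = [
    {
        "regularMarketTime": "2023-01-02 10:00:00",
        "regularMarketPrice": 100.0,
        "marketCap": 2000000000000,
        "exchange": "NMS",
        "AnalystRating": "2.0",
        "AnalystBuySell": "Buy",
        "MarketCapInTrillion": "$2.0T",
    }
]

EXPECTED_KEYS = {
    "regularMarketTime",
    "regularMarketPrice",
    "marketCap",
    "exchange",
    "AnalystRating",
    "AnalystBuySell",
    "MarketCapInTrillion",
}


def validate_payload(payload: str) -> list:
    """Decode the JSON payload and check that every record has the expected keys."""
    records = json.loads(payload)
    if not isinstance(records, list):
        raise ValueError("payload should be a JSON array of records")
    for record in records:
        missing = EXPECTED_KEYS - set(record)
        if missing:
            raise ValueError(f"record is missing keys: {sorted(missing)}")
    return records


payload = json.dumps(sample_records)
records = validate_payload(payload)

if __name__ == "__main__":
    print(f"{len(records)} record(s) look well formed")
```

The keys checked here are the column names that will later appear as fields in the Power BI dataset, so a typo caught at this stage saves a round trip through Azure.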

In this section, we are going to develop a Power BI dashboard using the real-time data coming through the Azure event hub.

To create a Power BI dashboard, navigate to the Outputs option under “Job Topology” and add Power BI as an output.

Then configure the output settings and authorize the connection so that a Power BI dataset can be created in your workspace.

Now go to your Power BI workspace, where you can see the dataset created by the Stream Analytics job.

To create a dashboard, go to New, select the Dashboard option in the menu, and type a name for your Power BI dashboard.

Now we can add visuals to the Power BI dashboard. To do that, go to the Edit section and select the “Add Tile” option.

Select the “Custom Streaming Data” option and select the dataset we created using the Azure Stream Analytics job.

Now you can add multiple cards, line charts, and other visuals depending on your requirements. Only a few visuals are available for streaming data, but they are enough to create a meaningful dashboard.

Authored by Janaka Nawarathna @ BISTEC Global

The faster your page loads, the longer visitors tend to stay on your website. Here is a framework that can make your web pages load up to 10X faster. This technology is AMP, or Accelerated Mobile Pages. AMP aims to bring a web page's loading time down to just a few seconds.

AMP is an open-source HTML framework developed by the AMP Open Source Project. Google originally created it as a competitor to Facebook Instant Articles and Apple News. It is optimized for mobile web browsing and designed to help web pages load faster. AMP pages can also be cached by a CDN, such as Microsoft Bing's or Cloudflare's AMP caches, allowing pages to be served quickly.

Usually, mobile pages have problems like slow loading, unresponsive pages and content displaced by ads et cetera. Studies show that,

Maintaining a fast mobile website to overcome these problems on your own often requires large development teams or specialized skill sets.

AMP is a smart way to overcome these issues. It does not require a large development team or budget. And to top it off, it is compatible with all modern browsers!

With AMP you can have,

For developers and publishers, it creates opportunities for distribution on platforms such as Google, Bing, Twitter, LinkedIn, and Pinterest.

Accelerated Mobile Pages are built from three components: AMP HTML, AMP JS, and AMP Cache.

The AMP Cache fetches AMP pages, validates them, and serves the cached copies. AMP is component-based and does not depend on any special tooling. All components are built with standard HTML, CSS, and JS.

AMP relies on static layouts and a minimal stylesheet: CSS must be inline and size-bound.
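To see what "size-bound" means in practice, the sketch below measures the inline stylesheet of a page and compares it with a byte budget. It is an illustration, not an AMP validator. The 75,000-byte figure is an assumption based on the limit the AMP documentation has described for custom CSS, so check the current documentation before relying on it. The `page` string is a made-up fragment and not a valid AMP document.

```python
import re

# Assumed limit: the AMP documentation has described a 75,000-byte cap on custom CSS.
MAX_AMP_CSS_BYTES = 75_000

# Matches the inline stylesheet that AMP pages declare with <style amp-custom>.
STYLE_PATTERN = re.compile(
    r"<style\s+amp-custom\s*>(.*?)</style>", re.DOTALL | re.IGNORECASE
)


def amp_custom_css_size(html: str) -> int:
    """Return the UTF-8 byte size of the <style amp-custom> block, or 0 if absent."""
    match = STYLE_PATTERN.search(html)
    return len(match.group(1).encode("utf-8")) if match else 0


def within_css_budget(html: str, limit: int = MAX_AMP_CSS_BYTES) -> bool:
    """Check whether the inline stylesheet fits within the byte budget."""
    return amp_custom_css_size(html) <= limit


# Made-up fragment used only to exercise the helper.
page = "<html><head><style amp-custom>h1{color:red}</style></head><body></body></html>"

if __name__ == "__main__":
    print(amp_custom_css_size(page), within_css_budget(page))
```

A check like this could run in a build step so that an oversized stylesheet is caught before the page is published.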

“Hello World” with AMP

Authored by Evantha Harshana @ BISTEC Global